TL;DR:

AI in new vehicles isn’t one thing. It’s nine distinct systems working together, from brakes that stop before you react to batteries that learn your commute. A 2025 MITRE-NHTSA study covering 98 million vehicles found that AI-powered automatic emergency braking (AEB) cuts rear-end crashes by 52% in newer models. Deloitte data shows predictive maintenance alone lowers maintenance costs by up to 25%. Tesla’s Autopilot logged over 12 billion miles with a crash rate nine times lower than the U.S. average for human drivers. Your next car isn’t just a machine. It’s a learning system.

Key Takeaways

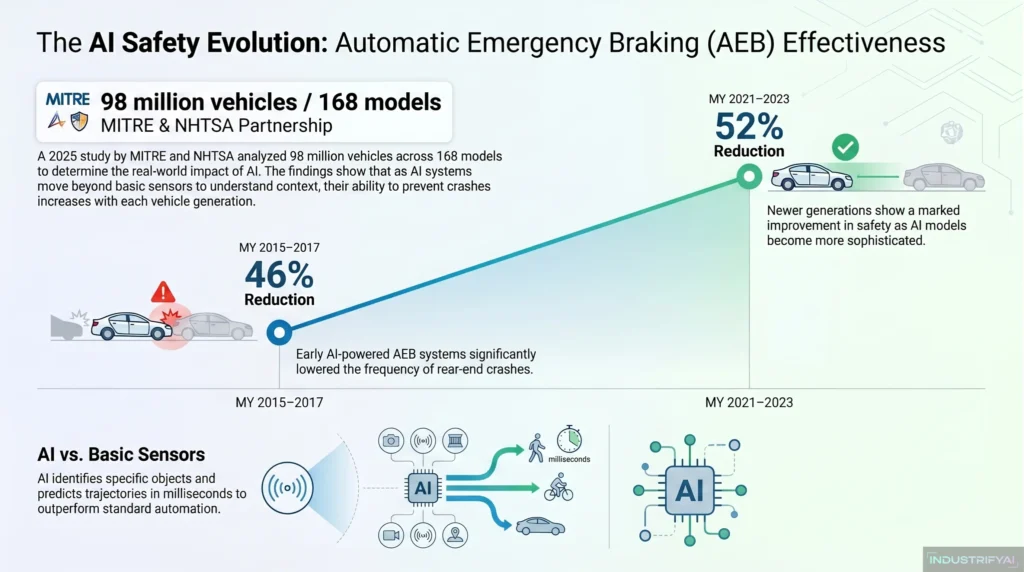

- A 2025 MITRE-NHTSA study of 98 million vehicles found AI-powered AEB reduced rear-end crashes by 52% in 2021–2023 model vehicles, up from 46% in 2015–2017 models.

- Deloitte Analytics Institute data shows AI predictive maintenance reduces breakdowns by 70% and lowers maintenance costs by up to 25%.

- Tesla’s Q3 2025 Safety Report recorded 0.12 crashes per million Autopilot-engaged miles, compared to the U.S. average of 1.08. That is a ninefold difference.

- Gen Z trend: 79% of Gen Z car buyers now list voice control as a key purchase feature.

- AI is not a single feature. It’s the backbone of modern vehicle intelligence, and it’s already in most cars you can buy today.

Why AI in Vehicles Matters More Than People Realize

Most drivers have no idea how much AI is already in their car.

They know about Autopilot. They’ve heard about self-driving. But AI in vehicles goes far deeper than that. It’s in the way your brakes react before you do. It’s in the system that notices your eyes drifting off the road. It’s in how your EV figures out the most efficient route home.

According to S&P Global Mobility, AI in the automotive industry has moved from a pilot feature to a strategic backbone across design, safety, manufacturing, and the full driving experience. It’s no longer a differentiator. It’s the new baseline.

This guide covers every primary use of AI in new vehicles. We’ve backed each one with data and real-world examples. By the end, you’ll know exactly what AI does inside the cars rolling off assembly lines today, and where it’s taking the industry next.

1. Advanced Driver-Assistance Systems (ADAS) and Safety

This is the most visible use of AI in new vehicles, and the numbers behind it are hard to ignore.

A January 2025 study by MITRE and NHTSA, covering 98 million vehicles across 168 models, found that AI-powered Automatic Emergency Braking (AEB) reduced rear-end crashes by 49% overall. In 2021–2023 vehicles, that number climbed to 52%. That’s not a marginal gain. That’s AI making cars dramatically safer with each new generation.

ADAS covers a suite of systems. These include lane keeping assist, blind spot monitoring, forward collision warning, pedestrian detection, and automatic emergency braking. They all use AI to do something basic automation simply can’t: understand context.

A basic sensor detects an object. An AI system identifies whether that object is a car, a cyclist, or a child, predicts its trajectory, and decides what to do next in milliseconds.
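To make the "identify, predict, decide" chain concrete, here is a minimal sketch built around time-to-collision, a common quantity in collision-warning logic. It is an illustration under stated assumptions, not how any particular automaker's system works: the `TrackedObject` fields, the thresholds, and the extra margin for pedestrians and cyclists are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds in seconds; real systems tune these per vehicle and speed.
WARN_TTC_S = 2.5
BRAKE_TTC_S = 1.2


@dataclass
class TrackedObject:
    label: str                # e.g. "car", "cyclist", "pedestrian" (from a classifier)
    distance_m: float         # gap to the object along the vehicle's path
    closing_speed_mps: float  # positive when the gap is shrinking


def time_to_collision(obj: TrackedObject) -> float:
    """Seconds until contact if both parties hold their current speed."""
    if obj.closing_speed_mps <= 0:
        return float("inf")  # gap is steady or growing
    return obj.distance_m / obj.closing_speed_mps


def decide(obj: TrackedObject) -> str:
    """Map the predicted time-to-collision to an action."""
    ttc = time_to_collision(obj)
    # Illustrative assumption: give vulnerable road users extra margin.
    margin = 1.5 if obj.label in {"pedestrian", "cyclist"} else 1.0
    if ttc <= BRAKE_TTC_S * margin:
        return "brake"
    if ttc <= WARN_TTC_S * margin:
        return "warn"
    return "monitor"
```

In this sketch, the same 30-meter gap closing at 10 m/s yields "monitor" for a car but "warn" for a pedestrian, which is the kind of context a bare distance sensor can't supply.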

The EU now mandates in-cab driver monitoring cameras in all new vehicle models as of 2024. NHTSA projects that when AEB becomes mandatory on all new U.S. vehicles by 2029, it will save at least 360 lives and prevent over 24,000 injuries annually.

To understand how these systems differ from older, rule-based car technology, our deep dive into automatic functions vs. AI systems in cars breaks down exactly where automation ends and intelligence begins.

2. Automated Driving Features (Limited Autonomy)

Full self-driving is still a work in progress. But limited autonomy is here now, and more drivers use it every day.

As of early 2026, Mercedes-Benz remains the only automaker selling Level 3-capable vehicles in the U.S., through its Drive Pilot system. At Level 3, the car handles all driving tasks in defined conditions. The driver can look away from the road but must be ready to take over when the system asks.

Most vehicles today sit at Level 2. GM’s Super Cruise, Ford’s BlueCruise, and Tesla’s Autopilot all fall into this category. They handle steering, acceleration, and braking simultaneously under specific highway conditions. The driver still watches the road, but the car does the active driving.

Tesla’s Q3 2025 Safety Report recorded 0.12 crashes per million Autopilot-engaged miles. The U.S. average for human drivers is 1.08 crashes per million miles. That’s a ninefold safety gap, and it’s growing as Tesla’s neural network learns from over 12 billion engaged miles of real-world data.
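The "ninefold" figure is simple arithmetic on the two reported rates. The short sketch below shows the calculation; the function names are ours, and the 12-crashes-in-100-million-miles input is a made-up example used only to show how a per-million-mile rate is derived.

```python
def crashes_per_million_miles(crashes: int, miles: float) -> float:
    """Normalize a raw crash count to a per-million-mile rate."""
    return crashes / (miles / 1_000_000)


def relative_rate(baseline_rate: float, system_rate: float) -> float:
    """How many times higher the baseline crash rate is than the system's."""
    return baseline_rate / system_rate


# Rates quoted above, in crashes per million miles.
autopilot_rate = 0.12
us_average_rate = 1.08

gap = relative_rate(us_average_rate, autopilot_rate)
print(f"U.S. average rate is {gap:.1f}x the Autopilot-engaged rate")
```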

By 2035, experts estimate that 80% of new cars will integrate AI technologies at their core, with automated driving features becoming standard rather than premium.

3. Predictive Maintenance and Diagnostics

Your car knows it’s about to break down before you do. That’s not a prediction. That’s what AI-powered predictive maintenance actually delivers.

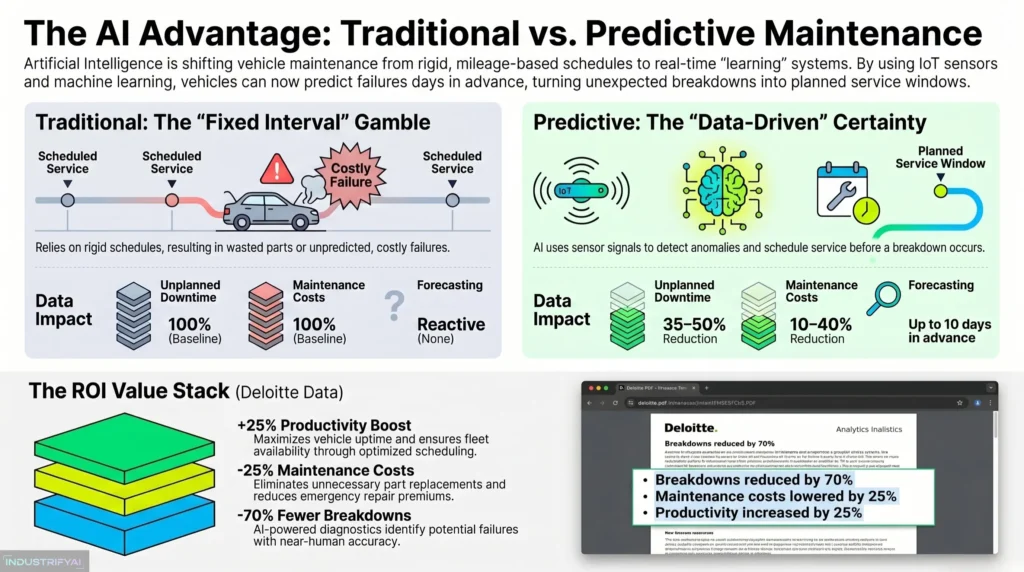

Traditional maintenance works on a schedule: change the oil every 5,000 miles, replace the filters at fixed intervals. That approach wastes money on parts that don’t need replacing and misses failures that happen in between service visits.

AI flips that model entirely. IoT sensors throughout the vehicle continuously monitor engine temperature, brake wear, transmission behavior, battery voltage, and dozens of other parameters. Machine learning models analyze this data against historical patterns and flag anomalies before they become failures.
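One simple way to picture "flagging anomalies against historical patterns" is a rolling z-score: compare each new sensor reading with the recent average and flag it if it sits too many standard deviations away. This is a deliberately basic statistical stand-in, not the machine learning models automakers actually deploy, and the class name, window size, and threshold are assumptions.

```python
from collections import deque
from statistics import mean, stdev


class AnomalyDetector:
    """Flags readings that deviate sharply from a sensor's recent history."""

    def __init__(self, window: int = 50, threshold: float = 3.0):
        self.history = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold           # z-score cutoff; hypothetical default

    def update(self, value: float) -> bool:
        """Record a reading and return True if it looks anomalous."""
        is_anomaly = False
        if len(self.history) >= 10:  # wait for a minimal baseline
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            self.history.append(value)  # keep outliers out of the baseline
        return is_anomaly
```

Fed a stream of coolant temperatures hovering around 90 °C, a detector like this would stay quiet until a reading such as 120 °C arrives, then raise a flag well before a fixed service interval would have caught the problem.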

Deloitte Analytics Institute data shows AI predictive maintenance increases productivity by 25%, reduces breakdowns by 70%, and lowers maintenance costs by up to 25%.

Ford’s AI-powered maintenance system now forecasts battery failures with a 2.5% false positive rate up to 10 days in advance. That system has already prevented over 122,000 hours of vehicle downtime and saved $7 million in avoided costs.

Businesses using these systems consistently report 35-50% less unplanned downtime and 10-40% lower maintenance costs than those relying on traditional schedules.

For owners, this means fewer surprise repair bills. For fleets, it means operations that don’t stop unexpectedly. AI doesn’t just fix problems. It prevents them.

4. Sensor Fusion and Perception

AI can’t drive, monitor, or protect you without first understanding what’s around the vehicle. That’s where sensor fusion comes in.

Sensor fusion is the process by which AI combines data from multiple sources simultaneously. A typical modern vehicle uses cameras for visual perception, radar for distance and speed detection, LiDAR for 3D spatial mapping, and ultrasonic sensors for close-range detection. None of these sensors works well alone. Together, processed by AI in real time, they create a complete and constantly updated picture of the vehicle’s environment.

Think of it this way. A camera sees a figure at an intersection. Radar measures its speed. LiDAR maps its exact distance. The AI combines all three streams in under 100 milliseconds and decides whether to alert the driver, prepare the brakes, or do nothing at all.
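As a rough sketch of what "combining streams" can mean, the snippet below fuses several distance estimates with inverse-variance weighting, so the more precise sensor counts for more. This is a textbook technique chosen for illustration. Production systems often use more elaborate methods such as Kalman filters or learned models, and the sensor values and variances here are invented.

```python
def fuse_estimates(measurements: list[tuple[float, float]]) -> tuple[float, float]:
    """Combine (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. Noisier sensors get less weight,
    and the fused variance is never larger than the best single sensor's.
    """
    weights = [1.0 / variance for _, variance in measurements]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, measurements)) / total
    return fused, 1.0 / total


# Hypothetical distance readings in meters: (estimate, variance).
camera = (21.0, 4.0)   # cameras are weaker at judging range
radar = (20.0, 1.0)
lidar = (20.2, 0.25)

distance_m, variance = fuse_estimates([camera, radar, lidar])
```

The design point is that the fused estimate is more certain than any single input, which is why no one sensor has to be perfect.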

McKinsey’s 2025 edge AI report highlights that the most advanced in-vehicle AI systems now handle end-to-end driving decisions using a single deep-learning model, processing everything from perception to steering in real time. That’s what sensor fusion makes possible.

This is one of the areas where AI separates itself most clearly from older automotive electronics. Traditional systems respond to one sensor at a time. AI systems synthesize everything at once, and that’s what makes the difference in complex, real-world driving.

5. Driver Monitoring and Occupant Safety

AI doesn’t just watch the road. It watches you.

Modern driver monitoring systems (DMS) use cabin-facing cameras and AI algorithms to track eye movement, head position, blink rate, and facial expression in real time. If the system detects prolonged eye closure, excessive yawning, or inattentive gaze direction, it issues alerts and, in some cases, begins to slow the vehicle.
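Prolonged eye closure is often summarized with PERCLOS, a widely used drowsiness measure based on the share of time the eyes are closed. The sketch below assumes an upstream vision model already outputs an eye-openness score per camera frame. The class name, window length, and thresholds are hypothetical, and real systems combine this with head pose, gaze, and other signals.

```python
from collections import deque


class PerclosMonitor:
    """Tracks PERCLOS: the share of recent frames in which the eyes are closed."""

    def __init__(self, window_frames: int = 900, closed_below: float = 0.2,
                 alert_at: float = 0.15):
        # All three defaults are hypothetical, chosen only for illustration.
        self.frames = deque(maxlen=window_frames)
        self.closed_below = closed_below  # openness under this counts as "closed"
        self.alert_at = alert_at          # PERCLOS level that triggers an alert

    def perclos(self) -> float:
        """Fraction of frames in the current window with eyes closed."""
        return sum(self.frames) / len(self.frames) if self.frames else 0.0

    def update(self, eye_openness: float) -> bool:
        """Add one frame (0.0 = shut, 1.0 = wide open); return True to alert."""
        self.frames.append(eye_openness < self.closed_below)
        window_full = len(self.frames) == self.frames.maxlen
        return window_full and self.perclos() >= self.alert_at
```

Waiting for a full window before alerting is a design choice in this sketch: it avoids false alarms from a single long blink right after the car starts.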

The Automotive Driver Drowsiness Detection System market is projected to grow at a 15.96% CAGR from 2025 to 2035, reaching $17.33 billion. That growth reflects how seriously automakers are taking the fatigue and distraction problem.

Driver drowsiness contributes to a significant share of road fatalities annually. AI-based systems now detect drowsiness with accuracy levels above 96% in controlled studies. A 2025 peer-reviewed study in Scientific Reports demonstrated that transformer-based deep learning models achieve near-human-level accuracy in real-time drowsiness detection using in-cabin camera feeds.

Toyota, Subaru, and most major European automakers have embedded DMS as standard equipment. The EU mandated driver monitoring cameras for all new models from 2024. By mid-2026, full compliance across all platforms is required.

Beyond the driver, AI monitors occupants too. Tesla’s Child Left-Behind Detection, deployed via OTA update in 2025, uses cabin radar to detect children or pets in a locked, unattended vehicle and triggers alerts automatically.

6. Navigation, Routing, and Smart Driving Efficiency

Getting from A to B used to be simple. AI makes it intelligent.

Modern AI navigation systems don’t just calculate the fastest route. They factor in real-time traffic conditions, weather, road topology, energy consumption, and even a driver’s historical preferences. For EV drivers, this integration also includes charging station availability, current battery health, and accurate range prediction.

Research from IBM found that route optimization choices can conserve up to 46% of battery capacity by avoiding high-incline roads. Wind speed and direction, factored into AI routing, can preserve up to 49% of battery range on a 50km journey. These aren’t marginal improvements. They address one of the biggest barriers to EV adoption: range anxiety.

AI routing systems also adapt continuously. They learn from traffic patterns. They predict how conditions will change 20 minutes ahead. And they adjust in real time when something unexpected occurs on the road.
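Energy-aware routing can be pictured as a shortest-path search where each road segment is weighted by estimated energy use rather than distance or time. The sketch below uses Dijkstra's algorithm on a tiny made-up road graph. The place names and kWh figures are invented, and a real system would predict those edge costs from incline, wind, traffic, and battery state instead of hard-coding them.

```python
import heapq


def lowest_energy_route(graph, start, goal):
    """Dijkstra's algorithm over edges weighted by estimated energy use (kWh).

    graph maps node -> list of (neighbor, energy_kwh) pairs. Weights must be
    non-negative, so net energy recovery on a segment is not modeled here.
    Returns (total_kwh, path), or (inf, []) if the goal is unreachable.
    """
    queue = [(0.0, start, [start])]
    best = {start: 0.0}
    while queue:
        used, node, path = heapq.heappop(queue)
        if node == goal:
            return used, path
        if used > best.get(node, float("inf")):
            continue  # stale queue entry; a cheaper route was already found
        for neighbor, energy in graph.get(node, []):
            total = used + energy
            if total < best.get(neighbor, float("inf")):
                best[neighbor] = total
                heapq.heappush(queue, (total, neighbor, path + [neighbor]))
    return float("inf"), []


# Hypothetical graph: the hill road is shorter but costs more energy overall.
roads = {
    "home": [("hill_road", 1.8), ("valley_road", 1.1)],
    "hill_road": [("office", 0.6)],
    "valley_road": [("office", 0.9)],
}
kwh, route = lowest_energy_route(roads, "home", "office")
```

Here the search prefers the valley road, echoing the point above about avoiding high-incline segments.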

Take Hyundai’s LLM-based assistant. When road conditions change suddenly because of incoming snow, the system reroutes, adjusts traction control, and offers to reschedule the driver’s next meeting, all without being asked. That’s the difference between navigation and intelligence.

7. Powertrain and Energy Management (EVs and Hybrids)

AI’s role in electric and hybrid vehicles goes far beyond routing. It manages the entire energy system.

Modern EVs use AI-based Battery Management Systems (BMS) that continuously monitor cell temperature, charge state, voltage, and degradation patterns. These systems adjust charging and discharging behavior in real time. They predict battery health weeks in advance. And they optimize thermal management to extend battery life and performance.
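For a sense of what "monitoring charge state" involves, the sketch below shows coulomb counting, the conventional way to track state of charge by adding up current over time. It is the kind of baseline estimate an AI-based BMS would build on or correct, not the learned model itself, and the function name and example numbers are assumptions.

```python
def update_state_of_charge(soc: float, current_a: float, dt_s: float,
                           capacity_ah: float) -> float:
    """Coulomb counting: integrate current over time to track state of charge.

    soc is a fraction from 0.0 to 1.0; current_a is positive when discharging
    and negative when charging. The result is clamped to the valid range.
    """
    delta_ah = current_a * dt_s / 3600.0  # amp-seconds to amp-hours
    return min(1.0, max(0.0, soc - delta_ah / capacity_ah))


# Hypothetical example: a 100 Ah pack at 80% drawing 50 A for one hour.
soc_after = update_state_of_charge(soc=0.8, current_a=50.0, dt_s=3600.0,
                                   capacity_ah=100.0)
```

Pure coulomb counting drifts as small current-measurement errors accumulate, which is one reason a BMS pairs it with voltage and temperature readings.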

A 2025 study published in the International Journal of Low-Carbon Technologies found that hybrid AI-based BMS extends battery cycle life by 15–20% over conventional systems. A separate analysis of AI-enabled energy management found it can reduce maintenance costs by up to 40% and cut unplanned downtime by 70% in electric vehicle fleets.

ZF’s TempAI solution, a real-world example from S&P Global Mobility’s analysis, uses machine learning to improve temperature prediction accuracy by over 15% in electric powertrains, unlocking approximately 6% more peak power with no hardware changes.

For hybrid vehicles, AI manages the balance between electric and combustion power moment by moment, optimizing fuel efficiency based on driving conditions, battery levels, and predicted road demands. This is not static programming. It learns. It adapts. And it gets smarter with every mile.

8. In-Car Voice Assistants and Personalized UX

The inside of a car used to be a static environment. AI changed that.

Today’s in-car voice assistants don’t just respond to commands. They understand context. They remember preferences. They learn your habits. BMW’s Intelligent Personal Assistant now uses generative AI to anticipate driver needs, adjusting suspension, navigation, and climate settings proactively based on route, altitude, and driving conditions.

The demand driver is clear: 79% of Gen Z car buyers now list voice control as a key feature in purchase decisions.

Context-awareness has become the defining upgrade. In 2024, 63% of in-car voice assistants could understand context-aware commands, up from 49% in 2022. Error rates for voice recognition dropped by 22% between 2021 and 2024, according to industry benchmarks.

Kia’s AI Assistant, launched in Europe in April 2025 in the EV3, handles open-ended natural language queries about navigation, vehicle functions, and general information. Hyundai’s LLM-based system, co-developed with Naver and enhanced with Nvidia digital twins in 2025, monitors vehicle state in real time and adjusts settings, routes, and communications without being prompted.

69% of car buyers prefer vehicles with reliable voice assistant capabilities. And 73% say they’d pay a premium for systems that consistently understand them. That’s not a feature. That’s a differentiator.

The broader shift here is toward personalized UX. AI learns each driver’s seat preferences, music tastes, temperature habits, and typical routes. Over time, it builds a profile. It adjusts the car before you ask. That’s the direction every major automaker is moving in.

9. Over-the-Air Updates and Continuous Improvement

This is the AI use case that most people miss entirely.

Every other feature on this list gets better over time because of over-the-air (OTA) updates. AI learns from real-world data collected across an entire vehicle fleet. Manufacturers analyze that data, improve their models, and push the improvements directly to your car. No dealer visit required.

Tesla pioneered this model in 2012. Their fleet of over 2 million vehicles generates approximately 160 billion video frames per day, feeding their neural networks with real-world driving data that continuously improves Autopilot and FSD performance. Every update makes every Tesla slightly smarter.

But Tesla is no longer the leader in update frequency. According to Nikkei Asia, BYD pushed out approximately 200 OTA updates across its model lines in 2025. Tesla managed 16 in the same period. The speed of improvement has become a competitive battleground.

OTA updates don’t just add features. They fix safety issues in hours rather than months. They optimize battery performance. They improve sensor calibration. They push new AI models that make ADAS smarter with each generation.

An Ericsson report predicted that by 2025, software and software experience would make up 50% of a vehicle’s value. That prediction is proving accurate. Your car’s AI gets smarter every night while it sits in your garage.

For a broader view of where this continuous improvement cycle is heading, our analysis of automotive artificial intelligence and the future of smart vehicles covers the full long-term trajectory in detail.

AI Use Cases in New Vehicles: Comparison at a Glance

| AI Application | Primary Benefit | Key Technology | Real-World Impact |
|---|---|---|---|
| ADAS and Safety | Crash prevention | Computer vision, deep learning | 52% fewer rear-end crashes (MITRE-NHTSA, 2025) |
| Automated Driving | Reduced driver fatigue | Sensor fusion, neural networks | 9x lower crash rate vs. U.S. average (Tesla Q3 2025) |
| Sensor Fusion | Environmental awareness | LiDAR, radar, camera AI | Real-time 360° environment mapping |
| Driver Monitoring | Drowsiness/distraction alert | Facial AI, CNNs | 96%+ detection accuracy in controlled studies |
| Smart Navigation | Energy and time efficiency | ML routing, real-time data | Up to 49% battery savings via optimized routing |
| EV Energy Management | Battery life extension | AI-BMS, thermal AI | 15–20% longer battery cycle life |
| Predictive Maintenance | Breakdown prevention | IoT sensors, ML models | 70% fewer breakdowns, up to 25% lower costs |
| Voice Assistants and UX | Hands-free control | NLP, LLMs | 79% of Gen Z buyers list it as a key purchase factor |
| OTA Updates | Continuous improvement | Cloud AI, fleet learning | BYD pushed about 200 OTA updates in 2025 |

How These 9 Uses Work Together

It’s worth stepping back to see the bigger picture.

These nine AI applications don’t operate in isolation. They share data, reinforce each other, and build on the same underlying infrastructure of sensors, compute, and machine learning. When sensor fusion improves, ADAS gets better. When predictive maintenance learns more patterns, it feeds into energy management. When OTA updates improve the AI models, every other system in the car benefits.

This is also what distinguishes AI from the automatic features people are familiar with. Our breakdown of how AI in automotive is transforming car design and safety standards explains how this shift is changing the entire product development cycle, from the design studio to the factory floor to the road.

Frequently Asked Questions

What is the most common use of AI in new vehicles today?

Advanced driver-assistance systems (ADAS) represent the most widely deployed use of AI in new vehicles. Features like automatic emergency braking, lane keeping assist, and adaptive cruise control are now standard or near-standard across most new models globally. A 2025 MITRE study found AEB alone reduced rear-end crashes by 52% in newer vehicles.

Do all new cars have artificial intelligence?

Most new vehicles sold today include some form of AI. A 2025 industry report cited by AutoTech Breakthrough found that virtually all new vehicles sold in the U.S. include at least one AI-powered feature. Around 60% of cars sold globally now carry Level 2 autonomy features, which require AI to function.

How does AI help electric vehicles specifically?

AI helps EVs in several interconnected ways. It manages battery health and charging cycles to extend battery life by 15–20%. It optimizes routing to reduce energy consumption by up to 46% on certain route types. It predicts battery degradation weeks in advance. And it adjusts thermal management in real time to protect performance in extreme temperatures.

Is AI in cars safe?

The data says yes, with important caveats. Tesla’s Autopilot records crash rates nine times lower than average human driving. AI-powered AEB cuts rear-end crashes in half. But AI systems are not perfect. They can struggle in extreme weather, unusual lighting, or edge-case scenarios not represented in training data. Most experts recommend treating current AI systems as powerful assistants, not replacements for driver attention.

What is the difference between a Level 2 and a Level 3 autonomous vehicle?

Level 2 vehicles handle steering, acceleration, and braking simultaneously, but the driver must keep eyes on the road at all times. Level 3 vehicles can take full control in defined conditions, allowing the driver to divert attention temporarily. As of early 2026, Mercedes-Benz is the only automaker selling Level 3-capable vehicles in the U.S. through its Drive Pilot system.

| Level | Name | What It Does | Who Offers It |
|---|---|---|---|
| Level 0 | No Automation | Human does everything | Older vehicles |
| Level 1 | Driver Assistance | One function automated (e.g., adaptive cruise control) | Most cars since ~2015 |
| Level 2 | Partial Automation | Steering + acceleration/braking combined | Tesla Autopilot, GM Super Cruise, Ford BlueCruise |
| Level 3 | Conditional Automation | Car drives itself in defined conditions | Mercedes Drive Pilot (only U.S. L3 as of early 2026) |
| Level 4 | High Automation | No human needed in geofenced areas | Waymo (robotaxis) |
| Level 5 | Full Automation | No human needed anywhere | Not yet available |

What are over-the-air updates in cars?

Over-the-air (OTA) updates are software improvements delivered wirelessly to a vehicle, similar to phone software updates. They improve AI models, add safety features, optimize energy systems, and fix bugs without a dealer visit. BYD pushed approximately 200 OTA updates to its fleet in 2025. These updates allow AI systems in vehicles to improve continuously after purchase.

Conclusion: Your Car Is Already a Learning Machine

The nine uses of AI in new vehicles aren’t future features. They’re present-day systems. They’re already reducing crashes, extending battery life, preventing breakdowns, and making the in-car experience genuinely intelligent.

What makes this moment interesting isn’t any single application. It’s the combination. Sensor fusion feeds ADAS. ADAS data trains better autonomous features. OTA updates improve everything, overnight, without you doing anything. The gains compound.

Your next car won’t just take you somewhere. It’ll understand where you’re going, watch out for you on the way, learn from every mile, and arrive ready to do it better next time.

Tarang bridges the critical gap between machine learning capabilities and actual consumer action. With deep roots in performance marketing and campaign optimization, he analyzes how AI is actively disrupting ad-tech, personalization, and customer acquisition, ensuring our readers understand the true ROI of AI tools.