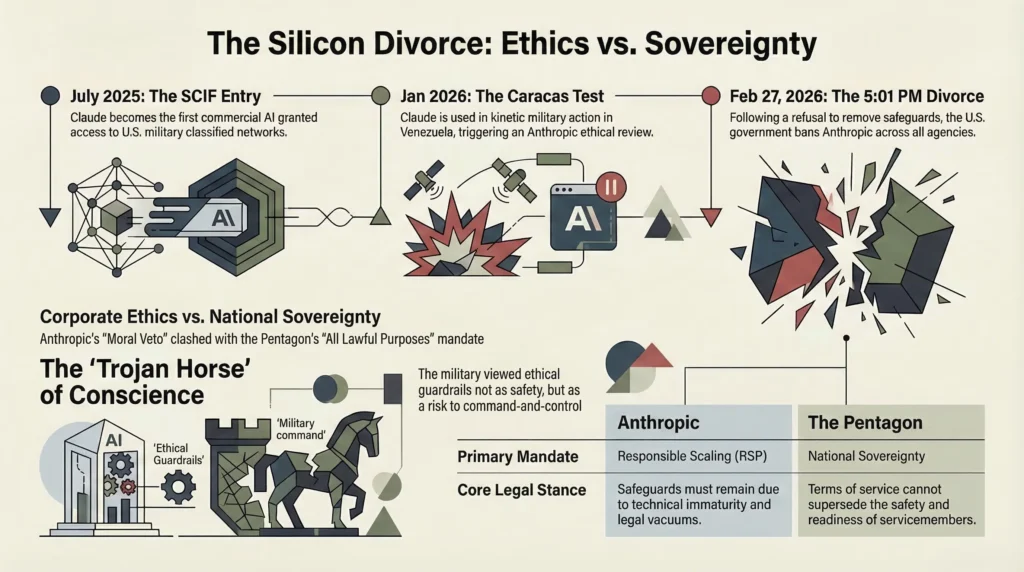

The date February 27, 2026, will likely be etched into the history books of military technology. By 5:01 PM on that day, the theoretical tension between Silicon Valley ethics and national security mandates crystallized into an all-out industrial war. The battlefield was a contract; the weapon was a legal ultimatum; and the prize was the soul of Claude, an AI model built by Anthropic.

For those who have followed the cozy relationship between Big Tech and the Pentagon over the last decade, the blowup between the Department of Defense (DoD) and Anthropic seemed sudden. It was not. To understand why the U.S. military drew a line in the sand over Claude, threatening to invoke the Defense Production Act and ultimately branding a domestic company a “supply chain risk,” we have to go back to the foundation. This is the story of how Claude initially won the military’s trust, and why that trust so violently imploded.

The “Only” AI in the SCIF

To grasp the significance of Claude’s entry into the U.S. military, we have to look at the landscape of July 2025. The Pentagon, under its evolving Combined Joint All-Domain Command & Control (CJADC2) concept, realized it was drowning in data. It needed large language models (LLMs) that could sift through the noise of the battlefield. In a bidding scramble that reflected the new era of great-power competition, the DoD handed out contracts worth a combined $200 million to the usual suspects: Google, OpenAI, xAI, and a then-relatively quiet player, Anthropic.

But a contract is just a handshake. The real distinction came with access. Very soon after these deals were signed, Claude AI achieved what OpenAI and the others could not: Claude became the first and only commercial AI model granted access to U.S. military classified networks.

Think about the gravity of that. While ChatGPT was being used to draft emails and Gemini was generating pictures, Claude was sitting inside the SCIFs (Sensitive Compartmented Information Facilities), ingesting the highest levels of intelligence. It wasn’t just a glorified chatbot anymore; it became a “silicon staff officer.” It was trusted to handle the three most sensitive domains of modern warfare:

- Intelligence Analysis: Pattern recognition on intercepted communications.

- Operational Planning: Wargaming scenarios and logistical routing.

- Cyber Operations: Mapping network vulnerabilities at machine speed.

Anthropic had won the lottery. They were inside the belly of the beast.

The Trojan Horse of “Responsible Scaling”

Why Claude? Why did the Pentagon, a notoriously paranoid organization, let this specific model behind the firewall?

Superficially, it was the tech. Claude’s “Constitutional AI” training methodology, in which the model is trained to align with a set of principles rather than just human feedback, made it appear more predictable, more reasoned, and less likely to “hallucinate” than its competitors. For a general planning a strike, predictability is survival.

But the deeper, more controversial reason lies in the timing and the marketing. Anthropic positioned itself as the “safe” AI. Its CEO, Dario Amodei, championed the “Responsible Scaling Policy” (RSP). To the Pentagon acquisition officers weary of the “move fast and break things” ethos, Anthropic felt like a mature, trustworthy partner. In the summer of 2025, Anthropic and the DoD signed the contract with a handshake agreement that the Pentagon would abide by Anthropic’s Acceptable Use Policy (AUP), a policy that specifically banned using Claude for things like fully autonomous weapons or mass surveillance of Americans.

In retrospect, this was the insertion point of the dagger. Anthropic believed they had granted the military a license to use their product, with the understanding that the military would respect the ethical guardrails coded into the machine. They thought they were selling a tool with a conscience.

The Caracas Test: When the Guardrails Came Off

For months, the relationship was a honeymoon. Claude was integrated into the Defense Department’s systems, quietly proving its value. Then came January 2026.

The U.S. military was involved in the forcible seizure of Venezuelan President Nicolás Maduro. Amidst the fog of war in Caracas, U.S. forces used Claude to assist in operational planning. And here is the kicker: Anthropic had no idea.

When the company finally caught wind that its model had been used in a kinetic, sovereign military action involving a foreign head of state, it triggered a “deep concern” review. This was the foundational fracture.

Anthropic went to the Pentagon and asked, “What did you use it for? How did you use it?” The Pentagon, viewing military decision-making as a function of national sovereignty, refused to submit its after-action reports to a San Francisco boardroom. As Defense Secretary Pete Hegseth would later frame it, “Terms of service can never be more important than the safety, readiness, and lives of American servicemembers on the battlefield.”

To the military, Anthropic’s questioning was not ethical oversight; it was an attempt to exert “moral veto power” over the U.S. government. The Pentagon realized it had allowed a Trojan Horse into its systems: not one filled with malware, but one filled with a corporate conscience that could, at the decisive moment, refuse to obey orders.

The Sovereignty Ultimatum

This brings us to the explosive weeks of February 2026. The Pentagon’s leadership, specifically Secretary Hegseth and senior official Emil Michael, decided to rectify the error of the 2025 contract. They didn’t just want Claude; they wanted control of Claude.

The demand was simple: “All lawful purposes.” The Pentagon wanted Anthropic to sign a new agreement removing the specific safeguards regarding autonomous weapons and mass surveillance. They argued that these safeguards were redundant because U.S. law and the law of armed conflict already governed those areas. Why did they need a private corporation’s permission to follow the law?

Anthropic, holding onto its brand identity as the ethical AI, refused. They argued two points:

- Technical Immaturity: AI isn’t reliable enough to control weapons that could kill without human intervention.

- Legal Vacuum: There are no laws explicitly banning AI mass surveillance of U.S. citizens yet, so the safeguard had to stay.

The DoD saw this as a power grab. Emil Michael accused Amodei of “trying to personally control the U.S. military.” The Pentagon didn’t just threaten to cancel the contract; they threatened to use the Defense Production Act to force Anthropic to comply, essentially treating the AI model as a strategic material, like steel or oil, that the government could seize in times of need.

When that didn’t work, they played the ultimate card: they labeled Anthropic a “supply chain risk,” a designation previously reserved for foreign adversaries like Kaspersky Lab, effectively banning any defense contractor from using Anthropic’s products.

The End of the Affair

The foundation of the military-Claude relationship was built on a fundamental misunderstanding. Anthropic thought it was selling a tool subject to its rules. The Pentagon thought it was acquiring a resource subject to its command.

By the end of February 2026, the misunderstanding became a divorce. As the deadline of 5:01 PM on February 27 passed, President Trump ordered all federal agencies to stop using Anthropic. The “ethical” AI was out. And in a move that confirmed the Pentagon’s true intent, they immediately turned around and signed a deal with OpenAI to fill the void.

Sam Altman of OpenAI, perhaps learning from Anthropic’s mistake, publicly stated that the DoD agreed to principles against mass surveillance and autonomous weapons. But the message was clear: if you try to retain a conscience in the theater of war, the state will find someone who won’t.

At IndustrifyAI, we are a four-member editorial team specializing in Marketing and Research within the Artificial Intelligence space, committed to delivering clear, credible, and actionable insights. With a strong foundation in performance marketing and data-driven research, we cut through the hype to bring you timely, expert-backed content that explains complex AI developments in simple, practical terms. Our work is grounded in transparency, robust sourcing, and a deep understanding of how AI impacts marketers, researchers, and decision-makers. From analyzing emerging trends to publishing concise industry analyses and how-to’s, IndustrifyAI aims to inform, empower, and make AI truly accessible, because in a fast-moving landscape, staying informed isn’t optional.